Product

An AI Visibility Operating System built around the full operating loop

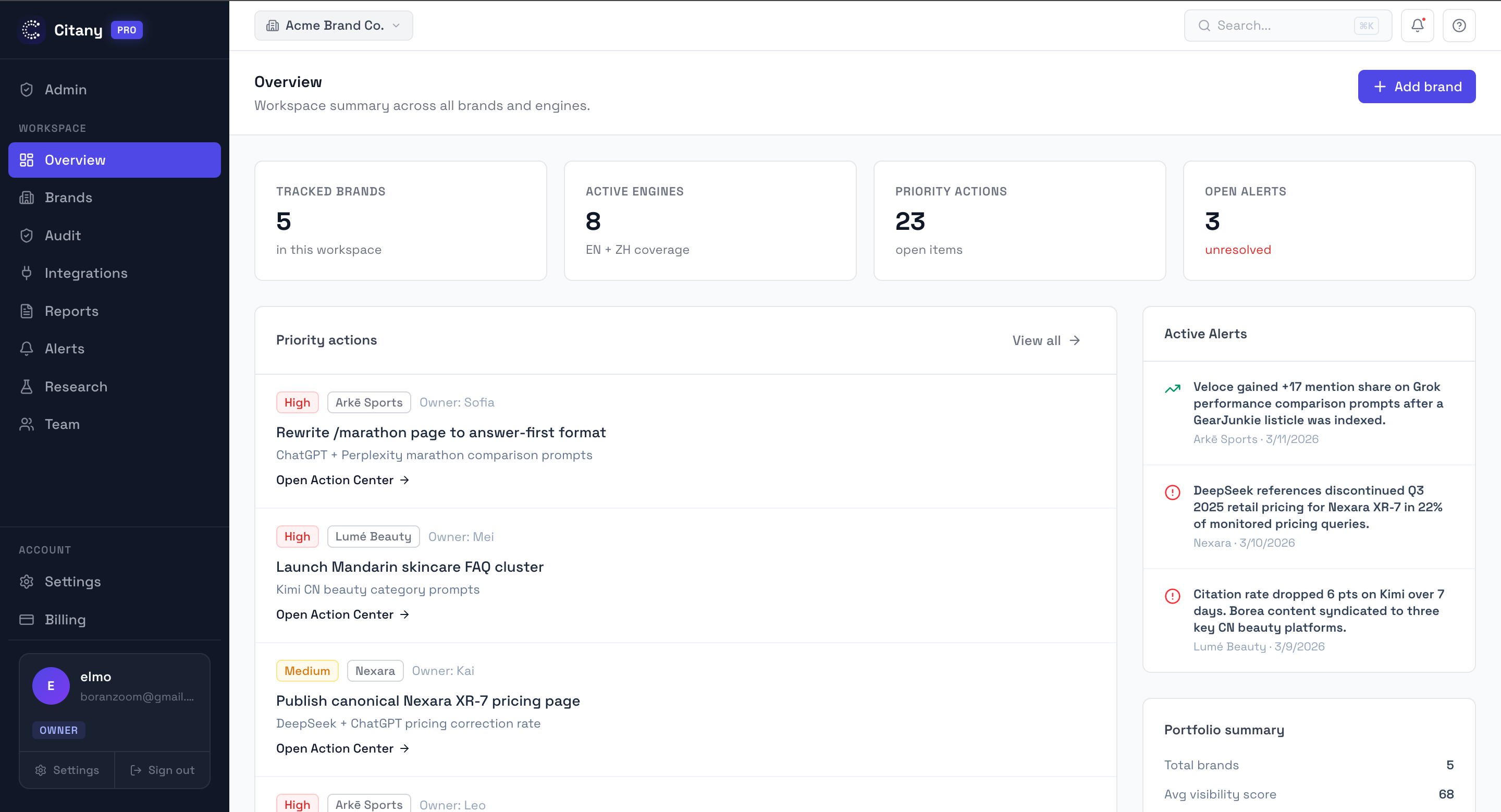

Citany turns AEO and GEO work into a system your team can actually run: define demand, monitor answer engines, diagnose citation gaps, act on the causes, verify movement, and report clearly.

AI Visibility OS

A full workflow from discovery to reporting

Citany is organized around the operating loop that cross-border teams actually run: define prompt demand, discover brand risks, monitor answer engines, diagnose citation gaps, act on the causes, verify movement, and report clearly.

Prompt Research

Map the queries people actually ask when discovering your category. Find the high-intent prompts your brand is missing — before competitors lock them up.

Multi-Engine Monitoring

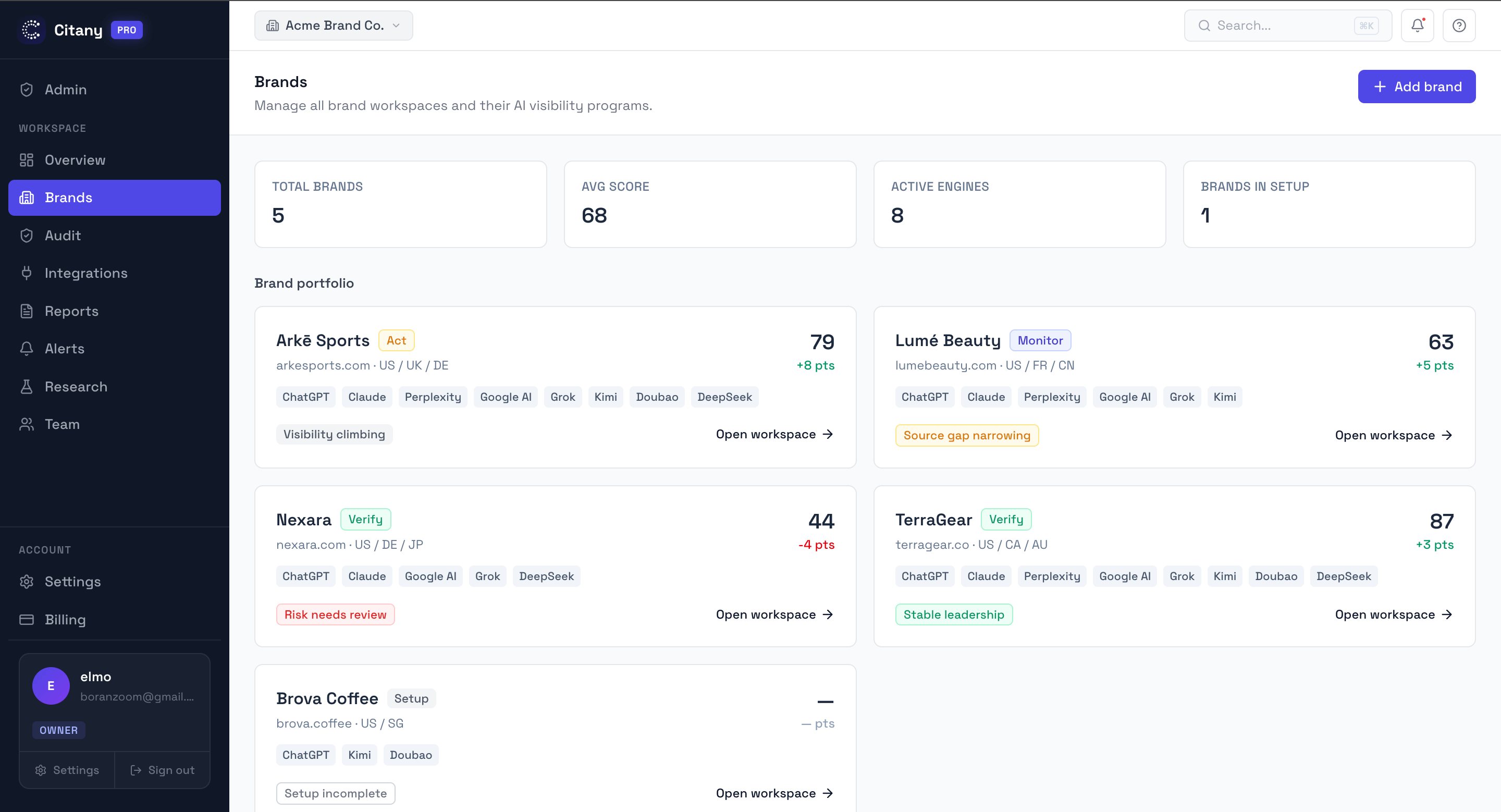

Track whether AI mentions your brand, what position it appears in, and which competitors get recommended first — across 8 supported AI engine paths.

Citation Intelligence

See exactly which URLs, domains, and content types AI cites when answering prompts in your category. Understand the source gap, not just the mention gap.

Competitor Gap Analysis

Find the specific prompts where competitors win and you don't. Understand whether they win because of better content, stronger review coverage, or clearer category framing.

Action Center

Convert diagnosis into a prioritized task list: which page to rewrite, which comparison asset to build, which third-party review gap to close first.

Visibility Reports

Generate clean PDF reports showing AI mention trends, citation changes, and action progress — for internal teams or white-label agency delivery.

What problem the product is meant to solve

Most teams already have analytics, Search Console, and some form of SEO reporting. What they do not have is a reliable way to answer six basic questions about AI visibility: Are we being mentioned? In which prompts? By which engines? Because of which sources? Versus which competitors? And what should we do next?

That is why Citany is not positioned as a dashboard that simply adds another score. It is an operating system for a new layer of demand: answer engines that shape recommendations before users ever reach your site.

What teams can do inside the product

- Create brand workspaces with competitors, languages, markets, and engine coverage.

- Track brand mention rate, citation rate, answer position, and source mix over time.

- See why competitors are cited through prompt-level and source-level breakdowns.

- Turn findings into action items for content, PR, entity cleanup, and page structure work.

- Export clear recurring reports without rebuilding the story every month.
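To make the tracked metrics concrete, here is a minimal sketch of how mention rate, citation rate, and average answer position could be computed from recorded answers. The `Observation` schema, the `summarize` function, and the metric definitions are illustrative assumptions, not Citany's actual data model or formulas.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    """One recorded answer from one engine for one prompt (hypothetical schema)."""
    prompt: str
    engine: str
    brand_mentioned: bool      # the brand name appears in the answer text
    brand_cited: bool          # an owned URL appears among the answer's sources
    position: Optional[int]    # 1-based rank of the brand in the answer, None if absent


def summarize(observations: list[Observation]) -> dict:
    """Return mention rate, citation rate, and mean answer position.

    Assumed definitions: rates are shares of all recorded answers;
    the average position only counts answers where the brand appears.
    """
    total = len(observations)
    if total == 0:
        return {"mention_rate": 0.0, "citation_rate": 0.0, "avg_position": None}
    mentioned = sum(o.brand_mentioned for o in observations)
    cited = sum(o.brand_cited for o in observations)
    positions = [o.position for o in observations if o.position is not None]
    avg_position = sum(positions) / len(positions) if positions else None
    return {
        "mention_rate": mentioned / total,
        "citation_rate": cited / total,
        "avg_position": avg_position,
    }


# Made-up sample data for illustration only.
sample = [
    Observation("best crm for exporters", "ChatGPT", True, False, 3),
    Observation("best crm for exporters", "Kimi", False, False, None),
    Observation("crm comparison", "Perplexity", True, True, 1),
    Observation("crm comparison", "Doubao", False, False, None),
]
print(summarize(sample))
```

Grouping the same records by engine, market, or prompt cluster before calling `summarize` would give the per-segment view that month-over-month comparisons rely on.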

Where teams usually get stuck without a system

- They only check branded prompts, so they miss the moments when people discover competitors first.

- They know AI is mentioning competitors but cannot tell whether the reason is page structure, weak reviews, poor category framing, or thin third-party evidence.

- They collect screenshots from different engines but have no consistent way to compare markets, prompt clusters, or month-over-month movement.

- They publish more content without knowing which page type or source type would actually change the answer.

What a useful first 30 days should look like

Week 1: baseline the market

Define the brand, competitors, markets, and prompt clusters so the team stops guessing about where the problem actually sits.

Week 2: identify source and topic gaps

Find which prompts mention competitors, which sources power those answers, and which owned assets are missing or weak.

Week 3: ship focused fixes

Prioritize the highest-leverage pages, FAQ blocks, comparison assets, entity cleanup, and third-party proof work first.

Week 4: verify and report

Check whether answers move, whether citations change, and whether the team now has a repeatable monthly operating loop.

What a team should expect as an outcome

A good AI visibility workflow does not promise instant dominance across every engine. It should give the team a reliable map of where they are invisible, where AI is getting the brand wrong, where competitors have a source advantage, and what to fix first.

That clarity is what turns AEO and GEO from vague experimentation into a measurable operating program.

Frequently asked questions

What AI engines does Citany monitor?

Citany supports 8 AI engine paths: ChatGPT, Claude, Grok, Gemini, Perplexity, DeepSeek, Kimi, and Doubao. Evidence quality varies by engine and measurement mode, so results should be interpreted path by path rather than as eight identical consumer surfaces.

How is Citany different from tools like Otterly, Profound, or AthenaHQ?

Most existing tools cover global mainstream models only. Citany also monitors Kimi and Doubao — the localized AI engines dominant in Asian markets — mapping the unique platform sources (Zhihu, Xiaohongshu) they draw citations from.

What does the free brand audit include?

You submit your brand URL and up to three competitors. Citany runs a one-time, market-adaptive 3-engine baseline diagnostic and delivers a structured report within 2 business days. It is a diagnostic offer, not a product trial.

My Google rankings look fine. Why does AI visibility matter?

Google search and AI search pull from different source ecosystems. Research shows only 8% URL overlap between top Google results and what ChatGPT cites. Ranking well on Google does not mean AI engines recommend your brand — they often cite review sites, directories, and comparison pages that never rank on page one.

Why do localized ecosystems need separate monitoring?

Kimi and Doubao cite completely different platforms than global models — Zhihu, Xiaohongshu, and Douyin instead of Reddit, G2, and LinkedIn. If your brand is invisible in these localized engines, you are missing the AI-driven discovery layer for Asian-market buyers.

Do you manipulate AI prompts to force my brand to appear?

No. Citany measures how AI engines respond to neutral, unbranded category and comparison prompts — the same way a real buyer would phrase them. The goal is honest diagnosis, not manufactured visibility.

If AI keeps citing my competitors instead of me, what do I fix first?

Start with Citation Intelligence — it shows which specific pages and domains AI trusts in your category. Usually the fix is a missing comparison page, a weak review presence, or unclear category language on your own site. Citany's Action Center turns those findings into a specific task list.

Next Step

Check your own brand against these patterns

If this page matches what you are seeing, run a free audit to review prompt coverage, competitor gaps, and the sources shaping AI answers in your category.