
Monitor brand mentions in ChatGPT, Perplexity, and 6 other AI engines

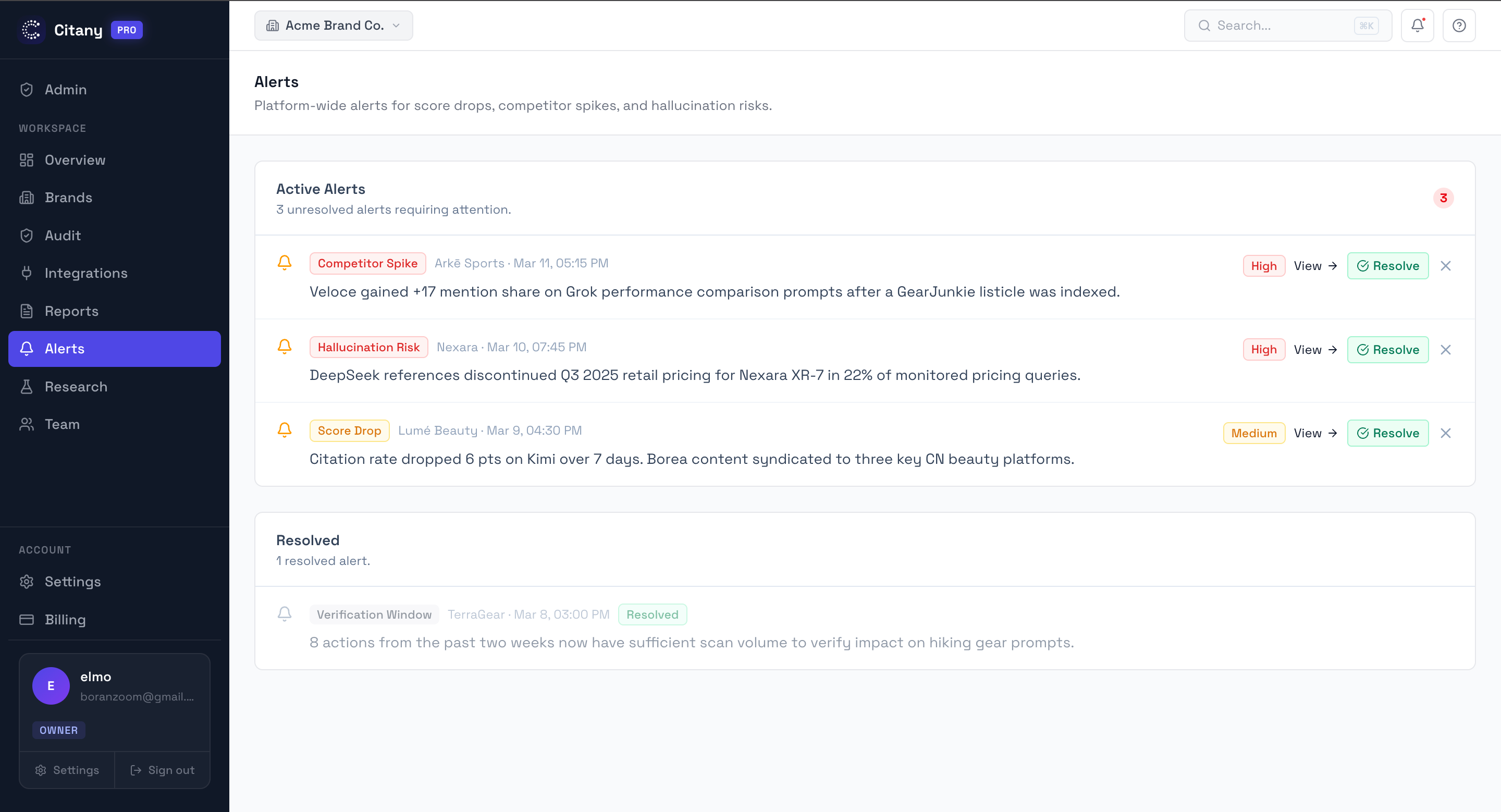

Monitor whether AI engines mention your brand, which competitors outrank you, and which sources shape the answer. Covers ChatGPT, Perplexity, Gemini, Claude, Grok, DeepSeek, Kimi, and Doubao.

What AI monitoring should answer that analytics cannot

Analytics can tell you what happened after users reached the site. AI monitoring answers what happened before that: whether the brand was recommended, whether a competitor appeared first, which sources were trusted, and how that differs by engine and market.

That difference matters because many teams misread AI visibility as a traffic problem. In practice it is often a recommendation, source-trust, and category-framing problem.

What gets measured

- Brand appearance or absence in each AI answer

- Answer position and relative competitor ordering

- Cited domains and URLs used as evidence

- Changes over time by engine, prompt cluster, and market
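To make the first two measurements concrete, here is a minimal Python sketch. It assumes a hypothetical `AnswerRecord` shape (engine, prompt, and the brands in the order they appear in the answer). This is an illustration, not the product's data model or API. For each engine it derives the mention rate, the average position when the brand is mentioned, and which competitors appear ahead of the brand.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class AnswerRecord:
    """One observed AI answer (hypothetical schema for illustration)."""
    engine: str
    prompt: str
    brands: list[str]  # brands in the order they appear in the answer


def engine_summary(records: list[AnswerRecord], brand: str) -> dict[str, dict]:
    """Per engine: mention rate, mean 1-based position when mentioned,
    and how often each competitor is listed ahead of `brand`."""
    by_engine: dict[str, list[AnswerRecord]] = defaultdict(list)
    for record in records:
        by_engine[record.engine].append(record)

    summary = {}
    for engine, rows in by_engine.items():
        positions = [r.brands.index(brand) + 1 for r in rows if brand in r.brands]
        ahead: dict[str, int] = defaultdict(int)
        for r in rows:
            # Assumption: when the brand is absent, every listed competitor counts as ahead.
            cutoff = r.brands.index(brand) if brand in r.brands else len(r.brands)
            for competitor in r.brands[:cutoff]:
                ahead[competitor] += 1
        summary[engine] = {
            "mention_rate": len(positions) / len(rows),
            "avg_position": sum(positions) / len(positions) if positions else None,
            "competitors_ahead": dict(ahead),
        }
    return summary


records = [
    AnswerRecord("chatgpt", "best crm", ["Acme", "Globex"]),
    AnswerRecord("chatgpt", "crm for startups", ["Globex", "Acme"]),
    AnswerRecord("chatgpt", "cheap crm", ["Globex"]),
    AnswerRecord("perplexity", "best crm", ["Acme"]),
]
chatgpt = engine_summary(records, "Acme")["chatgpt"]
print(chatgpt["avg_position"], chatgpt["competitors_ahead"])  # 1.5 {'Globex': 2}
```

Counting every listed competitor as "ahead" when the brand is missing is a design choice made here for illustration. A team could instead report absences as a separate figure.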

What teams usually miss without this layer

Google Search Console does not show how often ChatGPT or Perplexity recommends your brand. AI monitoring fills that blind spot by observing the answers directly.

For cross-border teams, the gap is larger, because English-language and Chinese-language ecosystems often surface different sources, platforms, and competitors.

How to use monitoring data responsibly

Look for patterns, not one-off screenshots

A single answer is not a basis for strategy. What matters is repeated absence, repeated competitor advantage, and repeated source preference across a representative prompt set.
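One way to separate a repeated absence from a one-off screenshot is to require several runs per prompt before flagging a gap. The Python sketch below assumes observations arrive as hypothetical `(prompt, mentioned)` pairs. The function name and both thresholds are illustrative, not product settings.

```python
from collections import defaultdict


def persistent_gaps(observations, min_runs=3, min_absence_share=0.75):
    """Return prompts where the brand was missing from most repeated runs.

    observations: iterable of (prompt, mentioned) pairs, one per observed answer.
    min_runs and min_absence_share are illustrative thresholds, not recommendations.
    """
    counts = defaultdict(lambda: [0, 0])  # prompt -> [runs, runs without the brand]
    for prompt, mentioned in observations:
        counts[prompt][0] += 1
        if not mentioned:
            counts[prompt][1] += 1
    return sorted(
        prompt
        for prompt, (runs, absent) in counts.items()
        if runs >= min_runs and absent / runs >= min_absence_share
    )


observations = (
    [("best crm", False)] * 4  # absent in every run: a pattern
    + [("crm for startups", True), ("crm for startups", False), ("crm for startups", True)]
    + [("cheap crm", False)]  # a single run: a one-off screenshot
)
print(persistent_gaps(observations))  # ['best crm']
```

Because "cheap crm" was observed only once, it is not flagged, even though the brand was missing from that single answer.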

Pair visibility with source evidence

A low mention rate matters more when the same competitor pages and publishers keep winning the answer.
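A rough way to see which publishers keep winning is to tally cited domains only in the answers that leave your brand out. This Python sketch assumes a hypothetical `(mentioned_brands, cited_urls)` input shape. The brands and domains in the example are placeholders.

```python
from collections import Counter
from urllib.parse import urlparse


def domains_when_absent(answers, brand):
    """Count cited domains across answers that do not mention `brand`.

    answers: iterable of (mentioned_brands, cited_urls) pairs (hypothetical shape).
    Each domain counts at most once per answer, so one heavily cited page
    cannot dominate the tally.
    """
    counts = Counter()
    for brands, urls in answers:
        if brand in brands:
            continue
        domains = set()
        for url in urls:
            host = urlparse(url).netloc.lower().removeprefix("www.")
            if host:
                domains.add(host)
        counts.update(domains)
    return counts.most_common()


answers = [
    (["Globex"], ["https://www.reviewsite.example/crm", "https://globex.example/pricing"]),
    (["Globex", "Initech"], ["https://reviewsite.example/compare", "https://www.reviewsite.example/top"]),
    (["Acme"], ["https://acme.example/"]),
]
print(domains_when_absent(answers, "Acme"))  # [('reviewsite.example', 2), ('globex.example', 1)]
```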

Track by prompt cluster and market

A brand may look healthy on branded prompts but weak on category prompts, or strong in one ecosystem but absent in another.
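Grouping the same mention data by prompt cluster and market can expose this kind of split. The sketch assumes hypothetical `(cluster, market, mentioned)` tuples and placeholder labels such as `branded`, `category`, `en`, and `zh`.

```python
from collections import defaultdict


def mention_rate_by_segment(rows):
    """Mention rate for each (prompt cluster, market) pair.

    rows: iterable of (cluster, market, mentioned) tuples; cluster and market
    labels are whatever taxonomy the team defines.
    """
    totals = defaultdict(lambda: [0, 0])  # (cluster, market) -> [answers, answers with the brand]
    for cluster, market, mentioned in rows:
        segment = totals[(cluster, market)]
        segment[0] += 1
        segment[1] += int(mentioned)
    return {seg: hits / n for seg, (n, hits) in totals.items()}


rows = [
    ("branded", "en", True), ("branded", "en", True),
    ("category", "en", True), ("category", "en", False),
    ("category", "zh", False), ("category", "zh", False),
]
for segment, rate in mention_rate_by_segment(rows).items():
    print(segment, rate)
```

In the example data, the brand appears in every branded English answer (rate 1.0), in half of the English category answers (0.5), and in none of the Chinese-market category answers (0.0).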

Mention Pattern Matrix: last 30 days · 14 prompts · 4 engines

What a strong first month looks like

- You know which engines and prompt clusters matter most for your category.

- You can explain where competitors are winning and why.

- You can separate visibility issues from source-trust issues instead of treating them as the same problem.

- You have a stable baseline for measuring whether future fixes actually work.

Frequently asked questions


How often should teams review AI monitoring data?

For most teams, a weekly review is enough to catch new changes, and a monthly review is enough for trend reporting. The point is consistency, not constant checking.

Is mention rate enough on its own?

No. Mention rate becomes much more useful when paired with answer position, cited sources, competitor ordering, and prompt cluster context.


Next Step

Check your own brand against these patterns

If this page matches what you are seeing, run a free audit to review prompt coverage, competitor gaps, and the sources shaping AI answers in your category.