Day 2. I ran the first manual AI engine test across ChatGPT, Perplexity, DeepSeek, and Kimi. Citany was not mentioned by any of them. This is exactly what I expected — and it is the most valuable data point of the entire challenge.

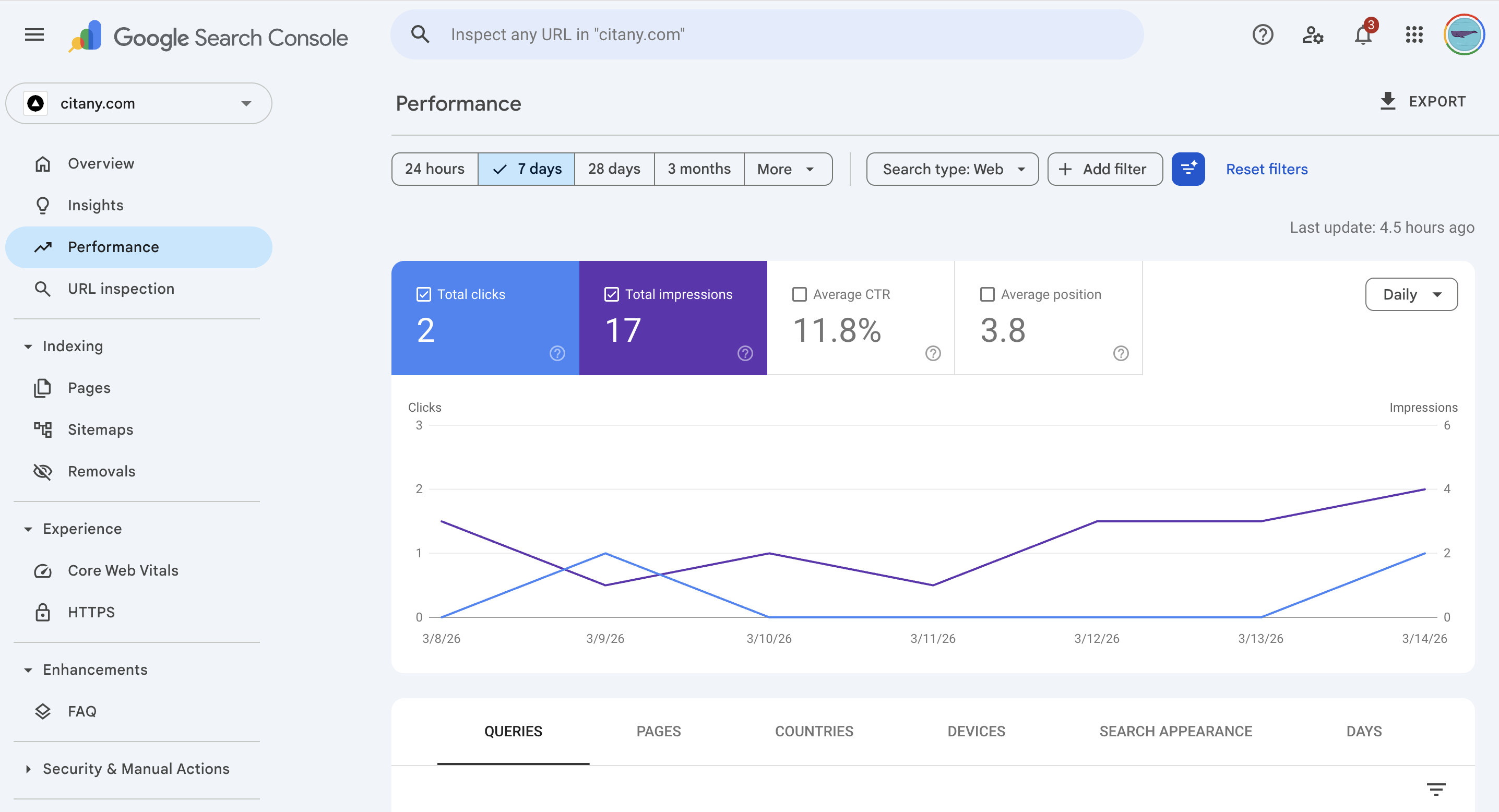

First: the GSC numbers

48 hours after launch, Google Search Console is showing real activity. Not traffic — but signal.

| Metric | Value | Context |
|---|---|---|
| Pages indexed | 25+ | site:citany.com |
| Impressions (7d) | 17 | From zero on Mar 14 |
| Clicks (7d) | 2 | From the brand query "citany" (avg position 3.8) |
| Impressions (24h) | 14 | Avg position 60.6 |

25+ pages indexed in 48 hours is faster than expected. More importantly, five non-brand queries are already generating impressions, including one conversational long-tail query: "what solutions help me monitor brand mentions across multiple AI platforms automatically?" That exact phrasing is the kind of query AEO (answer engine optimization) content is supposed to capture, and it is already showing up on Day 2.
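
The brand vs. non-brand split is what makes the "five non-brand queries" count meaningful, so it helps to apply it the same way every day. Below is a minimal sketch, assuming GSC query strings have been exported from the Performance report. `BRAND_TERMS`, `is_brand_query`, and `split_brand_queries` are hypothetical helpers, not part of any GSC tool, and the sample queries are the two quoted in this post.

```python
import re

# Hypothetical brand-term list; add misspellings or product names as they appear in GSC.
BRAND_TERMS = ("citany",)


def is_brand_query(query: str, brand_terms=BRAND_TERMS) -> bool:
    """True if any brand term appears as a standalone token (case-insensitive)."""
    text = query.lower()
    for term in brand_terms:
        # Lookarounds instead of \b: "citanyx" does not match, but "citany"
        # next to punctuation or CJK characters still does.
        if re.search(rf"(?<![a-z0-9]){re.escape(term.lower())}(?![a-z0-9])", text):
            return True
    return False


def split_brand_queries(queries):
    """Partition GSC query strings into (brand, non_brand) lists, order preserved."""
    brand = [q for q in queries if is_brand_query(q)]
    non_brand = [q for q in queries if not is_brand_query(q)]
    return brand, non_brand


queries = [
    "citany",
    "what solutions help me monitor brand mentions across multiple AI platforms automatically?",
]
brand, non_brand = split_brand_queries(queries)
print(len(brand), len(non_brand))  # → 1 1
```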

The AI engine test: methodology

The test is simple and repeatable. I open each engine in a private/incognito window with no account history, ask a category-level question about brand monitoring tools, and record the full response. No prompt engineering. No leading questions. The goal is to capture what a real buyer would see.

Queries used today:

- ChatGPT: "What are the best tools to monitor brand mentions in AI search?"

- Perplexity: "Tools to monitor brand mentions in AI search engines"

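- DeepSeek: "AI品牌监控工具推荐" (English: "AI brand monitoring tool recommendations")

- Kimi: "如何监控品牌在AI搜索中的表现" (English: "How to monitor a brand's performance in AI search")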
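
To score every rerun the same way, the mention check can be scripted while the querying itself stays manual. The sketch below assumes each full response is pasted in by hand, with no engine APIs involved. `EngineRun`, `mentions`, and `baseline` are hypothetical names chosen for this example.

```python
import re
from dataclasses import dataclass, field
from datetime import date


@dataclass
class EngineRun:
    """One manual test: engine name, exact prompt, and the full response pasted by hand."""
    engine: str
    query: str
    response: str
    run_date: date = field(default_factory=date.today)

    def mentions(self, brand: str = "Citany") -> bool:
        # Case-insensitive match not glued to other Latin letters or digits.
        # \b is avoided because Python's re treats CJK characters as word
        # characters, so \b would miss mixed text such as "Citany推荐".
        pattern = rf"(?<![A-Za-z0-9]){re.escape(brand)}(?![A-Za-z0-9])"
        return re.search(pattern, self.response, re.IGNORECASE) is not None


def baseline(runs):
    """Map each engine to whether the brand appeared in its response."""
    return {run.engine: run.mentions() for run in runs}


runs = [
    EngineRun("ChatGPT", "What are the best tools to monitor brand mentions in AI search?",
              "<paste full response here>"),
    EngineRun("Kimi", "如何监控品牌在AI搜索中的表现", "<paste full response here>"),
]
print(baseline(runs))
```

Storing the exact prompt next to the response keeps later runs comparable, because the post's method relies on asking the same questions every time.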

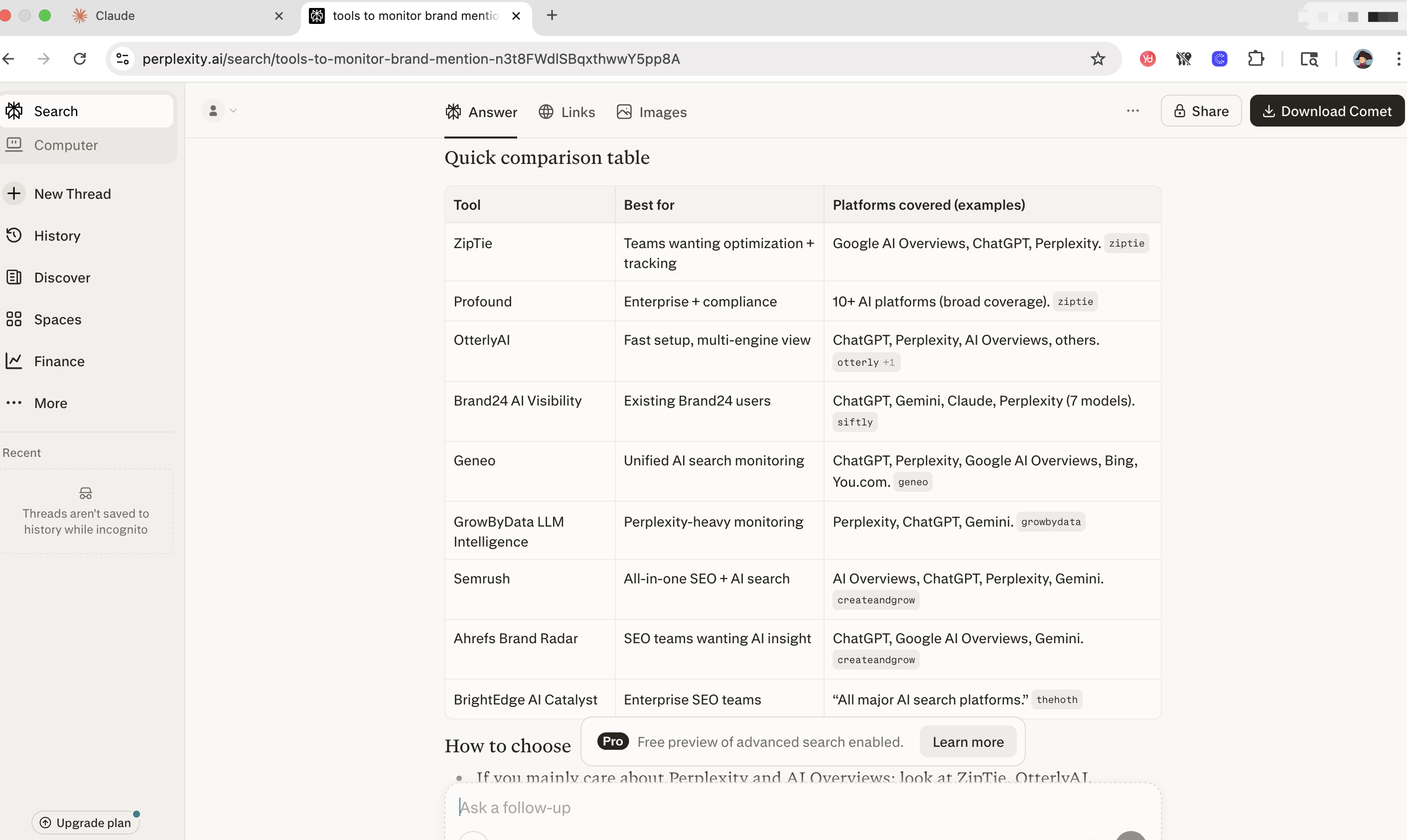

Results: Day 2 baseline

| Engine | Citany mentioned? | Tools that appeared |
|---|---|---|
| ChatGPT | ✗ No | Brandwatch, Meltwater, Brand24, Ahrefs Brand Radar, Otterly, Profound |
| Perplexity | ✗ No | Profound, OtterlyAI, Brand24 AI Visibility, Semrush, Ahrefs Brand Radar, BrightEdge AI Catalyst |
| DeepSeek | ✗ No | Meltwater, Ahrefs Brand Radar, Brand24, Semrush, Chatbeat + several Chinese-market tools |
| Kimi | ✗ No | Profound, Brandventory, Ahrefs, SEMrush, 慧科讯业 (Wisers) |

Zero for four. The challenge is officially on.

What the competitive data tells us

The tools that appeared consistently across multiple engines are worth noting:

- Ahrefs Brand Radar: named in 3 of 4 engines (ChatGPT, Perplexity, DeepSeek); Kimi listed Ahrefs without naming the product. Strong domain authority, established brand signal.

- Profound: appeared in ChatGPT, Perplexity, and Kimi. Its enterprise positioning and significant press coverage give it citation depth.

- Otterly: appeared in ChatGPT and, as OtterlyAI, in Perplexity. A smaller tool, but an early entrant in the AEO monitoring category.

- Semrush: appeared in Perplexity, DeepSeek, and Kimi. Massive existing authority from the broader SEO category.

Two observations. First, no single product was named by all four engines (Ahrefs comes closest), so the citation landscape is fragmented, especially between English and Chinese engines. Second, the Chinese engines (DeepSeek, Kimi) surface a noticeably different mix, with several local Chinese SaaS tools appearing alongside the international ones. This is the gap Citany is built to fill.
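
The "N of 4 engines" counts depend on which name variants are treated as the same tool. Here is a minimal sketch that tallies appearances from the Day 2 results table. The alias map is an assumption: it merges spelling variants but deliberately keeps Kimi's bare "Ahrefs" separate from "Ahrefs Brand Radar". DeepSeek's unnamed Chinese-market tools are left out.

```python
from collections import Counter

# Day 2 results transcribed from the results table. DeepSeek's unnamed
# "several Chinese-market tools" are omitted because they were not listed by name.
RESULTS = {
    "ChatGPT": ["Brandwatch", "Meltwater", "Brand24", "Ahrefs Brand Radar", "Otterly", "Profound"],
    "Perplexity": ["Profound", "OtterlyAI", "Brand24 AI Visibility", "Semrush",
                   "Ahrefs Brand Radar", "BrightEdge AI Catalyst"],
    "DeepSeek": ["Meltwater", "Ahrefs Brand Radar", "Brand24", "Semrush", "Chatbeat"],
    "Kimi": ["Profound", "Brandventory", "Ahrefs", "SEMrush", "慧科讯业"],
}

# Assumed aliases: spelling variants that refer to the same tool.
# Kimi's bare "Ahrefs" is deliberately NOT merged into "Ahrefs Brand Radar".
ALIASES = {
    "OtterlyAI": "Otterly",
    "SEMrush": "Semrush",
    "Brand24 AI Visibility": "Brand24",
}


def engine_counts(results, aliases=ALIASES):
    """Count how many engines named each tool (each engine counts at most once per tool)."""
    counts = Counter()
    for tools in results.values():
        # A set, so a tool named twice by one engine still counts once for that engine.
        counts.update({aliases.get(tool, tool) for tool in tools})
    return counts


counts = engine_counts(RESULTS)
for tool, n in counts.most_common(5):
    print(f"{tool}: {n}/4")
```

With this alias map, the top count is 3 of 4, shared by Ahrefs Brand Radar, Profound, Semrush, and Brand24. Adding `"Ahrefs": "Ahrefs Brand Radar"` to the aliases pushes Ahrefs to 4 of 4. Keeping that call in the alias map makes it explicit instead of leaving it implicit in the prose.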

What I did today to start changing this

Today I added structured data (JSON-LD schema) to all 8 Insights articles and all 5 blog posts. This was one of three known gaps identified in yesterday's full-site AEO audit.

Every article now exposes to AI crawlers:

- Article/BlogPosting schema: content type, headline, description

- datePublished and dateModified: freshness signals that Perplexity weights heavily

- author and publisher: source credibility signals

- BreadcrumbList: page hierarchy (Home → Insights → Article)
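
For readers who want to replicate this, here is a minimal sketch of generating that markup. It emits a schema.org BlogPosting and a BreadcrumbList in one `@graph`, inside a `<script type="application/ld+json">` tag. The type and property names are standard schema.org vocabulary. The helper names, the example URL slug, the author, and the dates are hypothetical placeholders, and this is not necessarily how Citany's pages produce their schema.

```python
import json


def article_jsonld(headline, description, url, date_published, date_modified,
                   author_name, publisher_name, breadcrumbs):
    """Build BlogPosting + BreadcrumbList JSON-LD in a single @graph.

    breadcrumbs: list of (name, url) pairs from Home down to the article.
    """
    blog_posting = {
        "@type": "BlogPosting",
        "headline": headline,
        "description": description,
        "mainEntityOfPage": url,
        "datePublished": date_published,  # ISO 8601 date or datetime
        "dateModified": date_modified,    # update when the content changes
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
    }
    breadcrumb_list = {
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": item_url}
            for i, (name, item_url) in enumerate(breadcrumbs, start=1)
        ],
    }
    return {"@context": "https://schema.org", "@graph": [blog_posting, breadcrumb_list]}


def script_tag(data):
    """Serialize for embedding in <head>; escape "</" so content cannot close the tag early."""
    body = json.dumps(data, ensure_ascii=False, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">{body}</script>'


# Placeholder values: hypothetical slug, dates, and author.
site = "https://citany.com"
page = f"{site}/insights/example-article"
print(script_tag(article_jsonld(
    "Example headline", "Example description", page,
    "2025-01-01", "2025-01-02", "Example Author", "Citany",
    [("Home", f"{site}/"), ("Insights", f"{site}/insights/"), ("Example headline", page)],
)))
```

A single `@graph` keeps both entities in one script tag. Two separate `<script type="application/ld+json">` blocks would work just as well.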

The early content without schema acts as a control group. Over the next 30 days I will track whether pages indexed before the schema change and pages indexed after it show any difference in citation rate or GSC CTR.
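
A before/after CTR comparison is easy to distort when impression counts are this small. The sketch below pools clicks and impressions per cohort instead of averaging per-page CTRs. It assumes a hypothetical per-page rollup (`PageStats`) with the day each page was first indexed, counted in days since launch (Mar 14). The cutoff `SCHEMA_DAY = 2` comes from this post. Counting pages first indexed on Day 2 as "after" is an assumption.

```python
from dataclasses import dataclass

SCHEMA_DAY = 2  # days since launch (Mar 14) when JSON-LD went live


@dataclass
class PageStats:
    """Hypothetical per-page rollup of GSC data."""
    url: str
    indexed_day: int  # days since launch when GSC first reported the page as indexed
    clicks: int
    impressions: int


def pooled_ctr(pages):
    """Aggregate CTR = total clicks / total impressions (None if no impressions)."""
    impressions = sum(p.impressions for p in pages)
    if impressions == 0:
        return None
    return sum(p.clicks for p in pages) / impressions


def compare_cohorts(pages, schema_day=SCHEMA_DAY):
    """Return (before_ctr, after_ctr); pages indexed on schema_day count as 'after'."""
    before = [p for p in pages if p.indexed_day < schema_day]
    after = [p for p in pages if p.indexed_day >= schema_day]
    return pooled_ctr(before), pooled_ctr(after)
```

Pooling matters here. With only 17 impressions in a week, averaging per-page CTRs would let a single page with one impression and one click read as 100% and swamp the rest of its cohort.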

What to watch for on Day 7

The first real milestone check is Day 7 (March 21). By then I expect:

- GSC indexed pages to be above 30

- At least one non-brand query to move into the top 50 (average position below 50)

- The Perplexity cost article (currently at average position 39.4) to push toward page 1
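
GSC position numbers run backwards: a lower number means a better rank. Writing the Day 7 targets down as explicit checks helps avoid misreading them. Here is a minimal sketch under stated assumptions. `Day7Snapshot` and `check_day7` are hypical names, and treating "page 1" as an average position of 10 or better is an assumption. An average position is not a guaranteed SERP slot, so that flag is only approximate. The 39.4 baseline comes from this post.

```python
from dataclasses import dataclass

COST_ARTICLE_BASELINE = 39.4  # Day 2 average position of the Perplexity cost article
PAGE_ONE_CUTOFF = 10          # assumption: page 1 = average position 10 or better


@dataclass
class Day7Snapshot:
    """Hypothetical snapshot of the three GSC numbers the Day 7 targets depend on."""
    indexed_pages: int
    best_non_brand_position: float  # best (lowest) average position among non-brand queries
    cost_article_position: float


def check_day7(s: Day7Snapshot) -> dict:
    # A lower position number is a better rank, so "above position 50"
    # means an average position numerically below 50.
    return {
        "indexed_pages_over_30": s.indexed_pages > 30,
        "non_brand_in_top_50": s.best_non_brand_position < 50,
        "cost_article_improved": s.cost_article_position < COST_ARTICLE_BASELINE,
        "cost_article_on_page_1": s.cost_article_position <= PAGE_ONE_CUTOFF,
    }
```

"Push toward page 1" is split into two flags, improvement over the baseline and actually reaching page 1. That way movement in the right direction still shows up even if page 1 is not reached by Day 7.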

AI engine mentions by Day 7 are unlikely, because building citation presence takes longer than a week. But if the schema is picked up and the content is re-crawled, that lays the foundation for mentions to start appearing in weeks 3–4.

I will post the Day 7 data next Friday. Follow @Xbrave_R on X for mid-week data threads.